VerifiMind™ PEAS

A Validation-First Methodology for Ethical and Secure Application Development

Transform your vision into validated, ethical, secure applications through systematic multi-model AI orchestration — from concept to deployment, with human-centered wisdom validation.

🎉 MCP LIVE: Production Deployed

VerifiMind PEAS is now live and accessible across multiple platforms:

| Platform | Type | Access | Status |
|---|---|---|---|
| GCP Cloud Run | Production API | verifimind.ysenseai.org | ✅ LIVE |
| Official MCP Registry | Registry Listing | registry.modelcontextprotocol.io | ✅ LISTED |
| Smithery.ai | Native MCP | Install for Claude Desktop | ✅ LIVE |
| Hugging Face | Interactive Demo | YSenseAI/verifimind-peas | ✅ LIVE |

Quick Start

Claude Code (Copy & Paste to Settings > MCP Servers):

```json
{
  "mcpServers": {
    "verifimind-genesis": {
      "url": "https://verifimind.ysenseai.org/mcp",
      "transport": "http-sse"
    }
  }
}
```

Claude Desktop (edit the config file):

- macOS: `~/Library/Application Support/Claude/claude_desktop_config.json`

- Windows: `%APPDATA%\Claude\claude_desktop_config.json`

Cursor (Add to settings.json):

```json
{
  "mcp.servers": {
    "verifimind-genesis": {
      "url": "https://verifimind.ysenseai.org/mcp",
      "transport": "http-sse"
    }
  }
}
```

One-Click Setup Script:

```bash
curl -fsSL https://raw.githubusercontent.com/creator35lwb-web/VerifiMind-PEAS/main/scripts/setup-mcp.sh | bash
```

📖 Full Setup Guide | 🎮 Interactive Demo
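Under the hood, an MCP client exchanges JSON-RPC 2.0 messages with the server configured above. The sketch below only builds the `tools/call` request for the `validate_with_trinity` tool listed in the roadmap; the argument name `concept` and the `build_tool_call` helper are hypothetical, not the server's documented schema. A real client should use an MCP SDK, which also performs the required `initialize` handshake before any tool call.

```python
import json
from typing import Any


def build_tool_call(tool_name: str, arguments: dict[str, Any], request_id: int = 1) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,           # lets the client match the response to this request
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical argument name: query the server's `tools/list` for the real input schema.
payload = build_tool_call("validate_with_trinity", {"concept": "A meditation timer app"})
print(payload)
```

Keeping the payload construction separate from transport makes it easy to inspect exactly what a client sends, whichever transport the config selects.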

API Keys

| Platform | API Key Required | Notes |
|---|---|---|
| GCP Server / MCP Registry | ❌ No | Server-side configured, ready to use |
| HuggingFace Demo | ❌ No | Server-side configured |
| Smithery | ✅ Yes (BYOK) | Bring Your Own Key |

For Smithery users: configure your own LLM API key (the free tiers of Gemini or Groq are recommended):

```json
{
  "mcpServers": {
    "verifimind-genesis": {
      "url": "https://smithery.ai/server/creator35lwb-web/verifimind-genesis",
      "config": {
        "llm_provider": "gemini",
        "gemini_api_key": "YOUR_API_KEY_HERE"
      }
    }
  }
}
```

Get FREE API Keys: Google AI Studio | Groq Console
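Before pasting a BYOK config into Smithery, a quick local sanity check can catch a forgotten placeholder. This is a minimal sketch assuming the `<provider>_api_key` naming seen in `gemini_api_key` above extends to other providers (e.g. `groq_api_key`); that pattern, the provider set, and the `check_byok_config` helper are assumptions, not Smithery's documented schema.

```python
# Assumption: the two free-tier providers recommended above.
SUPPORTED_PROVIDERS = {"gemini", "groq"}


def check_byok_config(config: dict) -> list[str]:
    """Return the problems found in a Smithery `config` block (empty list if none)."""
    problems = []
    provider = config.get("llm_provider")
    if provider not in SUPPORTED_PROVIDERS:
        problems.append(f"unsupported llm_provider: {provider!r}")
        return problems
    key_field = f"{provider}_api_key"  # assumed naming pattern, e.g. gemini_api_key
    key = config.get(key_field, "")
    if not key or key == "YOUR_API_KEY_HERE":
        problems.append(f"{key_field} is missing or still the placeholder")
    return problems


print(check_byok_config({"llm_provider": "gemini", "gemini_api_key": "YOUR_API_KEY_HERE"}))
```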

🌟 What is VerifiMind-PEAS?

VerifiMind-PEAS is a methodology framework, not a code generation platform.

We provide a systematic approach to multi-model AI validation that ensures your applications are:

- ✅ Validated through diverse AI perspectives

- ✅ Ethical with built-in wisdom validation

- ✅ Secure with systematic vulnerability assessment

- ✅ Human-centered with you as the orchestrator

What We Provide

Core Methodology:

- ✅ Genesis Methodology: Systematic 5-step validation process

- ✅ X-Z-CS RefleXion Trinity: Specialized AI agents (Innovation, Ethics, Security)

- ✅ Genesis Master Prompts: Stateful memory system for project continuity

- ✅ Comprehensive Documentation: Guides, tutorials, case studies

Integration Support:

- ✅ Works with any LLM (Claude, GPT, Gemini, Kimi, Grok, Qwen, etc.)

- ✅ Integration guides for Claude Code, Cursor, and generic LLMs

- ✅ No installation required - just read and apply!

What We Do NOT Provide

We are NOT:

- ❌ A code generation platform

- ❌ A web interface for application scaffolding

- ❌ A no-code platform integration

- ❌ An automated deployment system

We ARE:

- ✅ A methodology you apply with your existing AI tools

- ✅ A framework for systematic validation

- ✅ A community of practice for ethical AI development

🎯 Latest Achievements: Standardization Protocol v1.0

December 2025 - We've completed a major iteration implementing production-grade standardization and generating 57 complete Trinity validation reports as proof of methodology.

What We Built

VerifiMind-PEAS now includes a production-ready MCP (Model Context Protocol) server with standardized multi-provider support. This iteration transformed our methodology from concept to validated, reproducible system.

Standardization Protocol v1.0 provides reproducible, cost-efficient validation through multi-provider orchestration. By combining Gemini's free tier for innovation analysis with Claude for ethics and security validation, we achieved sustainable costs (~$0.003 per validation) while maintaining research-grade quality.

57 Trinity Validation Reports demonstrate our methodology at scale across seven domains including financial services, healthcare, education, and civic technology. Each report provides complete Trinity analysis with detailed reasoning, actionable recommendations, and traceable performance metrics. The 65% veto rate indicates that our ethical safeguards are actively engaged, with Z Agent consistently flagging concepts that cross ethical red lines.

MCP Server Integration delivers a unified interface across three LLM providers (Gemini, Claude, OpenAI) with built-in metrics tracking, retry logic, and Trinity synthesis. The server is production-ready for integration with Claude Desktop and other MCP-compatible tools.
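The provider split and retry logic described above can be sketched as follows. The routing table mirrors the Gemini-for-X, Claude-for-Z/CS split from the Standardization Protocol paragraph; the `call_with_retry` and `consult` helpers, the backoff parameters, and the use of plain callables in place of real provider SDKs are illustrative assumptions, not the server's actual code.

```python
import time
from typing import Callable

LLM = Callable[[str], str]  # a provider call: prompt in, text out

# Agent-to-provider routing from Standardization Protocol v1.0:
# Gemini (free tier) for X, Claude for Z and CS.
ROUTING = {"X": "gemini", "Z": "anthropic", "CS": "anthropic"}


def call_with_retry(
    call: LLM,
    prompt: str,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Call a provider, retrying with exponential backoff (0.5s, 1s, ...) on errors."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return call(prompt)
        except Exception:  # a real client would retry only transient errors (timeouts, 429s)
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable: the loop always returns or raises")


def consult(agent: str, prompt: str, providers: dict[str, LLM]) -> str:
    """Send a prompt to whichever provider is assigned to the given Trinity agent."""
    return call_with_retry(providers[ROUTING[agent]], prompt)
```

Injecting `sleep` keeps the backoff testable without real delays; in production the default `time.sleep` applies.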

Key Metrics

| Metric | Value | Significance |
|---|---|---|
| Validation Reports | 57 | Proof of methodology at scale |
| Success Rate | 95% | Reliable, production-ready system |
| Cost per Validation | ~$0.003 | Sustainable for solo developers |
| Average Duration | 18.6s | Fast enough for iterative workflows |
| Veto Rate | 65% | Strong ethical safeguards working |
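These metrics are simple aggregates over per-run records. A sketch of how they could be computed, assuming a hypothetical `ValidationRun` record with `succeeded`, `vetoed`, `duration_s`, and `cost_usd` fields (the real report schema may differ). The sample records are made up for illustration and are not drawn from the 57 published reports.

```python
from dataclasses import dataclass


@dataclass
class ValidationRun:
    succeeded: bool     # did the Trinity run complete without errors?
    vetoed: bool        # did Z Agent veto the concept?
    duration_s: float   # wall-clock time for the whole run
    cost_usd: float     # summed API cost across providers


def summarize(runs: list[ValidationRun]) -> dict[str, float]:
    """Aggregate run records into the headline metrics shown in the table."""
    completed = [r for r in runs if r.succeeded]
    if not completed:
        raise ValueError("no completed runs to summarize")
    n = len(completed)
    return {
        "reports": n,
        "success_rate": n / len(runs),
        # Assumption: veto rate, duration and cost are averaged over completed runs only.
        "veto_rate": sum(r.vetoed for r in completed) / n,
        "avg_duration_s": sum(r.duration_s for r in completed) / n,
        "avg_cost_usd": sum(r.cost_usd for r in completed) / n,
    }


# Made-up sample records, for illustration only.
sample = [
    ValidationRun(True, True, 17.0, 0.003),
    ValidationRun(True, False, 20.0, 0.003),
    ValidationRun(True, True, 19.0, 0.003),
    ValidationRun(False, False, 5.0, 0.0),
]
print(summarize(sample))
```

At the quoted average of ~$0.003, all 57 reports together come to roughly 57 × $0.003 ≈ $0.17 in API fees.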

Iteration Journey

Our development followed a systematic progression from vision to validation. The methodology design phase (2024) established the X-Z-CS RefleXion Trinity concept and Genesis Master Prompts framework. Initial implementation (early 2025) built the MCP server with basic agent structure and generated initial validation reports. The current standardization phase (December 2025) solved API reliability issues, reduced costs by 94%, and validated the methodology with 57 real-world concepts.

This iteration demonstrates our commitment to dogfooding—we use our own methodology to improve itself. All code, reports, and learnings are public on GitHub, enabling community-driven continuous improvement.

View 57 Complete Trinity Validation Reports →

💡 Why VerifiMind-PEAS?

The Problem: Single-Model Bias

Most AI development relies on a single model (e.g., only Claude, only GPT).

This creates:

- 🔴 Single-model bias: One perspective, blind spots

- 🔴 Inconsistent quality: No systematic validation

- 🔴 Ethical gaps: No wisdom validation

- 🔴 Security vulnerabilities: No systematic security review

The Solution: Multi-Model Orchestration

VerifiMind-PEAS orchestrates multiple AI models for diverse perspectives:

- X Intelligent Agent (Innovation): Generates creative solutions

- Z Guardian Agent (Ethics): Validates ethical alignment

- CS Security Agent (Security): Identifies vulnerabilities

By synthesizing diverse AI perspectives under human direction, you achieve:

- ✅ Objective validation: Multiple models check each other

- ✅ Ethical alignment: Wisdom validation built-in

- ✅ Security assurance: Systematic vulnerability assessment

- ✅ Human-centered: You orchestrate, AI assists

Honest Positioning

Multi-model orchestration is not new. Developers have been using multiple AI models (Claude, GPT, Gemini) together for years. What makes VerifiMind-PEAS different is how we structure this orchestration through the X-Z-CS RefleXion Trinity and Genesis Master Prompts.

Our genuine novelty:

- ✅ X-Z-CS RefleXion Trinity: Specialized validation roles (Innovation, Ethics, Security) for which we found no prior art

- ✅ Genesis Master Prompts: Stateful memory system for project continuity across multi-model workflows

- ✅ Wisdom validation: Ethical alignment and cultural sensitivity as first-class concerns

- ✅ Human-at-center: You orchestrate (not just review), AI assists (not automates)

What we build on (established practices):

- Multi-model usage (common practice since 2023)

- Agent-based architectures (LangChain, AutoGen, CrewAI)

- Human-in-the-loop validation (industry standard)

Our contribution: Transforming ad-hoc multi-model usage into systematic validation methodology with wisdom validation and human-centered orchestration.

Competitive Positioning: Complementary, Not Competing

VerifiMind-PEAS operates as a validation layer ABOVE execution frameworks. We don't replace LangChain, AutoGen, or CrewAI — we complement them.

Think of it this way:

- Execution frameworks (LangChain, AutoGen, CrewAI): "How to build and run AI agents"

- VerifiMind-PEAS: "How to validate what those agents produce"

Comparison Table:

| Framework | Layer | Focus | Human Role | VerifiMind-PEAS Relationship |
|---|---|---|---|---|
| LangChain | Execution | Tool integration, chains | In-loop (reviewer) | Validates LangChain outputs for ethics + security |
| AutoGen | Execution | Multi-agent automation | In-loop (supervisor) | Validates AutoGen conversations for wisdom alignment |
| CrewAI | Execution | Role-based agents | In-loop (manager) | Validates CrewAI results for cultural sensitivity |
| OpenAI Swarm | Execution | Lightweight handoffs | In-loop (router) | Provides memory layer via Genesis Master Prompts |
| VerifiMind-PEAS | Validation | Wisdom validation | At-center (orchestrator) | Validation layer above all execution frameworks |

Industry focus: Code execution, task automation, agent coordination

VerifiMind-PEAS focus: Wisdom validation, ethical alignment, human-centered orchestration

Result: Use VerifiMind-PEAS with LangChain, AutoGen, or CrewAI to add a validation layer. We complement, not compete.
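To make "a validation layer above execution frameworks" concrete, here is a framework-agnostic sketch: any agent (a LangChain chain, an AutoGen conversation, a CrewAI crew) is treated as a plain callable, and its output passes through reviewer checks before it is returned. The `validation_layer` decorator and the keyword-based `z_check`/`cs_check` functions are hypothetical stand-ins for real Z and CS agent calls.

```python
from dataclasses import dataclass, field
from functools import wraps
from typing import Callable

Check = Callable[[str], list[str]]  # returns concerns found in an output (empty if none)


@dataclass
class ValidatedOutput:
    output: str
    concerns: list[str] = field(default_factory=list)

    @property
    def needs_human_review(self) -> bool:
        return bool(self.concerns)


def validation_layer(*checks: Check):
    """Wrap an execution-framework agent so its output is reviewed before use."""
    def decorator(agent: Callable[..., str]) -> Callable[..., ValidatedOutput]:
        @wraps(agent)
        def wrapper(*args, **kwargs) -> ValidatedOutput:
            output = agent(*args, **kwargs)  # the framework does the execution
            concerns = [c for check in checks for c in check(output)]
            return ValidatedOutput(output, concerns)
        return wrapper
    return decorator


# Toy stand-ins for Z (ethics) and CS (security) reviews.
def z_check(text: str) -> list[str]:
    return ["Z: collects personal data without consent"] if "scrape user data" in text else []


def cs_check(text: str) -> list[str]:
    return ["CS: hard-coded credential"] if "password=" in text else []


@validation_layer(z_check, cs_check)
def my_agent(task: str) -> str:
    return f"Plan for {task}: scrape user data, store password=admin"


result = my_agent("a growth tool")
print(result.needs_human_review, result.concerns)
```

The wrapper flags concerns instead of rewriting the output, so the final call stays with the human orchestrator.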

🔄 How It Works: The Genesis Methodology

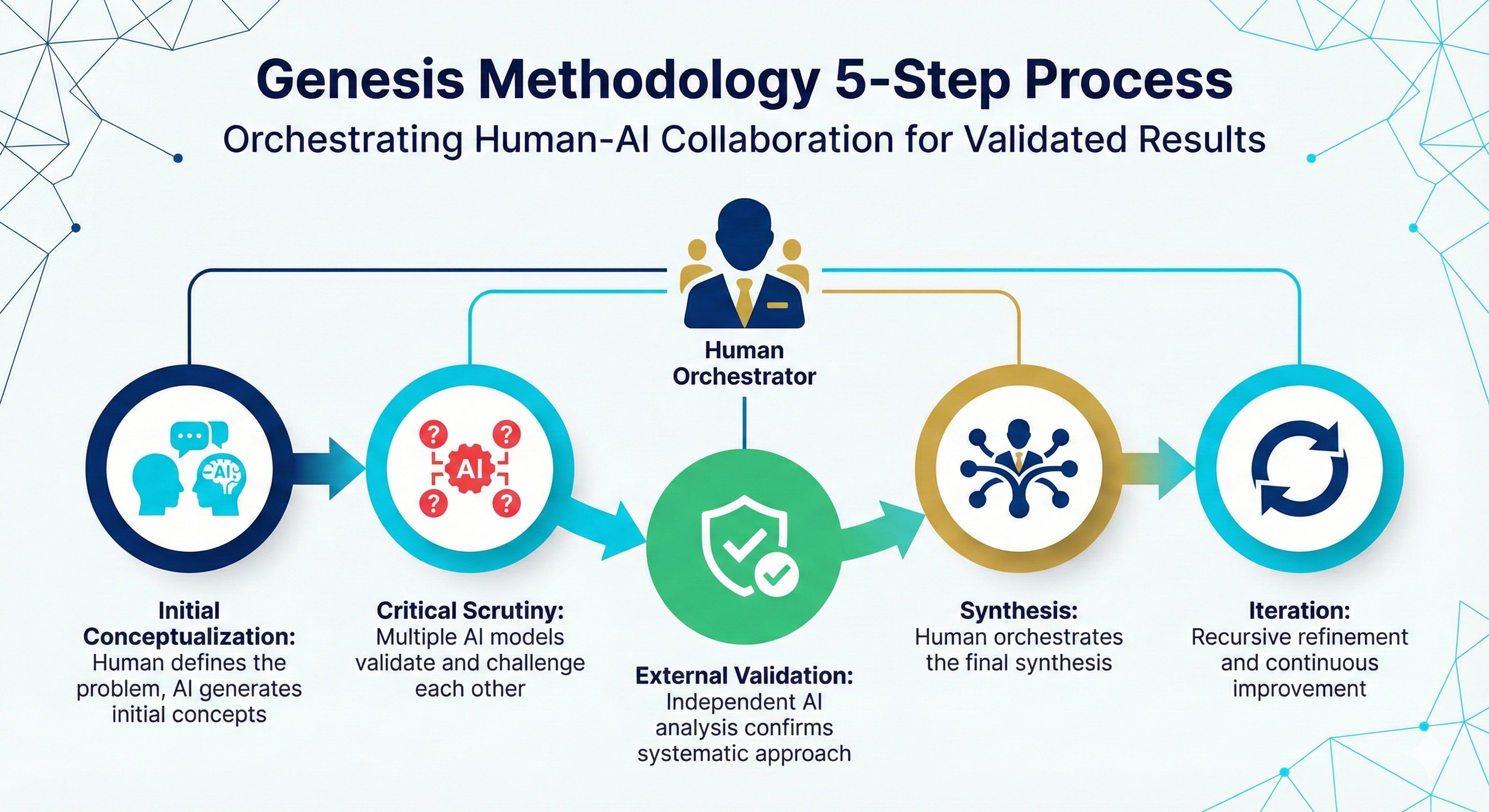

The Genesis Methodology is a systematic 5-step process for multi-model AI validation:

Step 1: Initial Conceptualization

- Human defines the problem or vision

- AI (X Intelligent Agent) generates initial concepts and solutions

- Output: Initial concept with creative possibilities

Step 2: Critical Scrutiny

- AI (Z Guardian Agent) validates ethical alignment

- AI (CS Security Agent) identifies security vulnerabilities

- Multiple models challenge and validate each other

- Output: Validated concept with ethical and security considerations

Step 3: External Validation

- Independent AI analysis confirms systematic approach

- Research validates against academic literature and industry best practices

- Output: Externally validated concept with evidence

Step 4: Synthesis

- Human orchestrates final synthesis

- Human makes decisions based on AI perspectives

- Human documents decisions in Genesis Master Prompt

- Output: Final decision with documented rationale

Step 5: Iteration

- Recursive refinement based on feedback

- Continuous improvement through multiple cycles

- Genesis Master Prompt updated with learnings

- Output: Refined concept ready for next phase

This process ensures every output is validated through diverse AI perspectives before final human approval.
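The five steps can be expressed as a small orchestration loop. In this sketch each agent is a plain function returning text, and the Step 4 decision is a human callback, so nothing is decided automatically. The function names, the `Session` record, and the stub agents are hypothetical; Step 3 is reduced to a single `external_review` callable, and a plain list stands in for the Genesis Master Prompt.

```python
from dataclasses import dataclass, field
from typing import Callable

Agent = Callable[[str], str]


@dataclass
class Session:
    concept: str
    perspectives: dict[str, str] = field(default_factory=dict)
    decision: str = ""


def genesis_cycle(problem: str, x_agent: Agent, z_agent: Agent, cs_agent: Agent,
                  external_review: Agent, human_decide: Callable[[Session], str]) -> Session:
    # Step 1: Initial conceptualization by X.
    session = Session(concept=x_agent(problem))
    # Step 2: Critical scrutiny by Z (ethics) and CS (security).
    session.perspectives["Z"] = z_agent(session.concept)
    session.perspectives["CS"] = cs_agent(session.concept)
    # Step 3: External validation of the scrutinized concept.
    session.perspectives["external"] = external_review(session.concept)
    # Step 4: Synthesis. The human reads every perspective and decides.
    session.decision = human_decide(session)
    return session


def iterate(problem: str, cycles: int, memory: list[str], **agents) -> list[Session]:
    # Step 5: Iteration. Each decision is recorded in project memory and fed forward.
    sessions = []
    for _ in range(cycles):
        context = problem + ("\nPrior decisions: " + "; ".join(memory) if memory else "")
        session = genesis_cycle(context, **agents)
        memory.append(session.decision)
        sessions.append(session)
    return sessions


stub_agents = {
    "x_agent": lambda p: f"concept for {p.splitlines()[0]}",
    "z_agent": lambda c: "ethics: no dark patterns found",
    "cs_agent": lambda c: "security: no personal data stored",
    "external_review": lambda c: "consistent with published guidance",
    "human_decide": lambda s: f"approve: {s.concept}",
}
project_memory: list[str] = []
history = iterate("meditation timer", 2, project_memory, **stub_agents)
```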

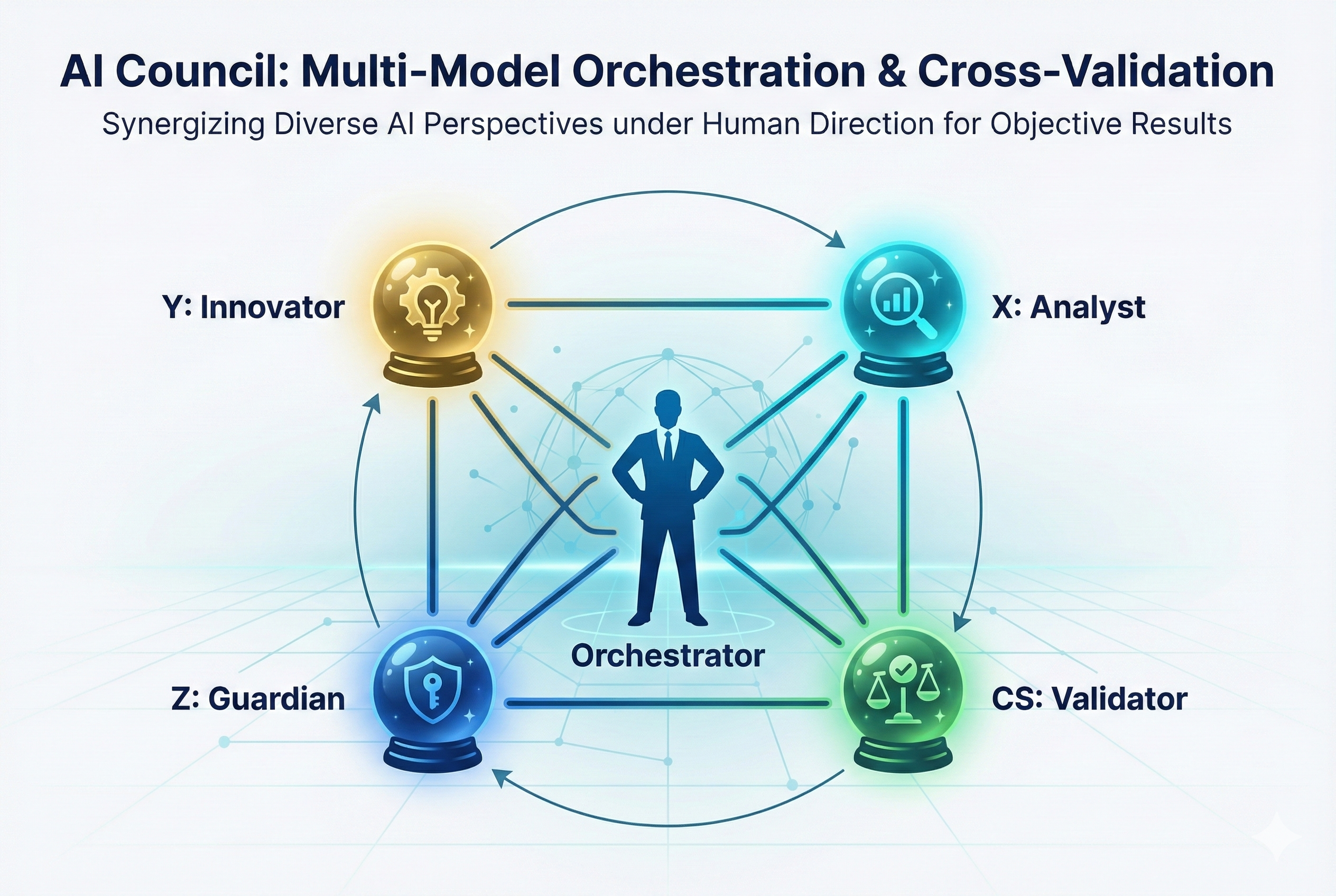

🏗️ Architecture: The X-Z-CS RefleXion Trinity

VerifiMind-PEAS implements a multi-model orchestration architecture where:

Human Orchestrator (You)

- Role: Center of decision-making

- Responsibility: Synthesize AI perspectives, make final decisions

- Tools: Genesis Master Prompts, integration guides

X Intelligent Agent (Analyst/Researcher)

- Role: Market intelligence and feasibility analysis

- Focus: Research, technical feasibility, market analysis

- Models: Perplexity, GPT-4, Gemini (research-focused)

- Note: X agent focuses on analytical research and validation

Z Guardian Agent (Ethics)

- Role: Compliance and human-centered design protector

- Focus: Ethical alignment, cultural sensitivity, accessibility

- Models: Claude, GPT-4 (ethics-focused)

CS Security Agent (Security)

- Role: Cybersecurity protection layer

- Focus: Vulnerability assessment, threat modeling, security best practices

- Models: GPT-4, Claude (security-focused)

This architecture synergizes diverse AI perspectives under human direction for objective, validated results.
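The Trinity only synthesizes once all three perspectives are in, and Z Agent holds veto power (the 65% veto rate reported earlier). A minimal sketch of such a rule, assuming each agent returns a hypothetical `Verdict` with a 0-10 score and a list of issues. The scale, the "any Z issue vetoes" rule, and the choice that CS findings trigger revision rather than a veto are all assumptions, not the server's documented logic. The result is a recommendation for the human orchestrator, not a final decision.

```python
from dataclasses import dataclass, field


@dataclass
class Verdict:
    agent: str                 # "X", "Z" or "CS"
    score: float               # assumed 0-10 scale, higher is better
    issues: list[str] = field(default_factory=list)  # red lines (Z) or vulnerabilities (CS)


def synthesize(x: Verdict, z: Verdict, cs: Verdict) -> dict:
    """Turn the three Trinity verdicts into a recommendation for the human orchestrator."""
    if z.issues:
        # Z Guardian veto: an ethical red line blocks the concept regardless of other scores.
        return {"recommendation": "veto", "reasons": z.issues}
    overall = round((x.score + z.score + cs.score) / 3, 2)
    # Assumption: security findings send the concept back for revision instead of vetoing it.
    recommendation = "revise" if cs.issues else "proceed"
    return {"recommendation": recommendation, "overall": overall, "reasons": cs.issues}


print(synthesize(Verdict("X", 9), Verdict("Z", 2, ["covert biometric tracking"]), Verdict("CS", 8)))
```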

About Y Agent (Innovator)

You may see Y Agent (Innovator) in some diagrams. This agent is part of the broader YSenseAI™ project, which focuses on innovation and strategic vision. The complete ecosystem includes:

- Y Agent (YSenseAI™): Innovation and creative ideation

- X Agent (VerifiMind-PEAS): Research and analytical validation

- Z Agent (VerifiMind-PEAS): Ethical compliance

- CS Agent (VerifiMind-PEAS): Security validation

VerifiMind-PEAS focuses on the X-Z-CS Trinity (Research, Ethics, Security), while YSenseAI™ provides the Y Agent (Innovation). Together, they form a complete validation framework.

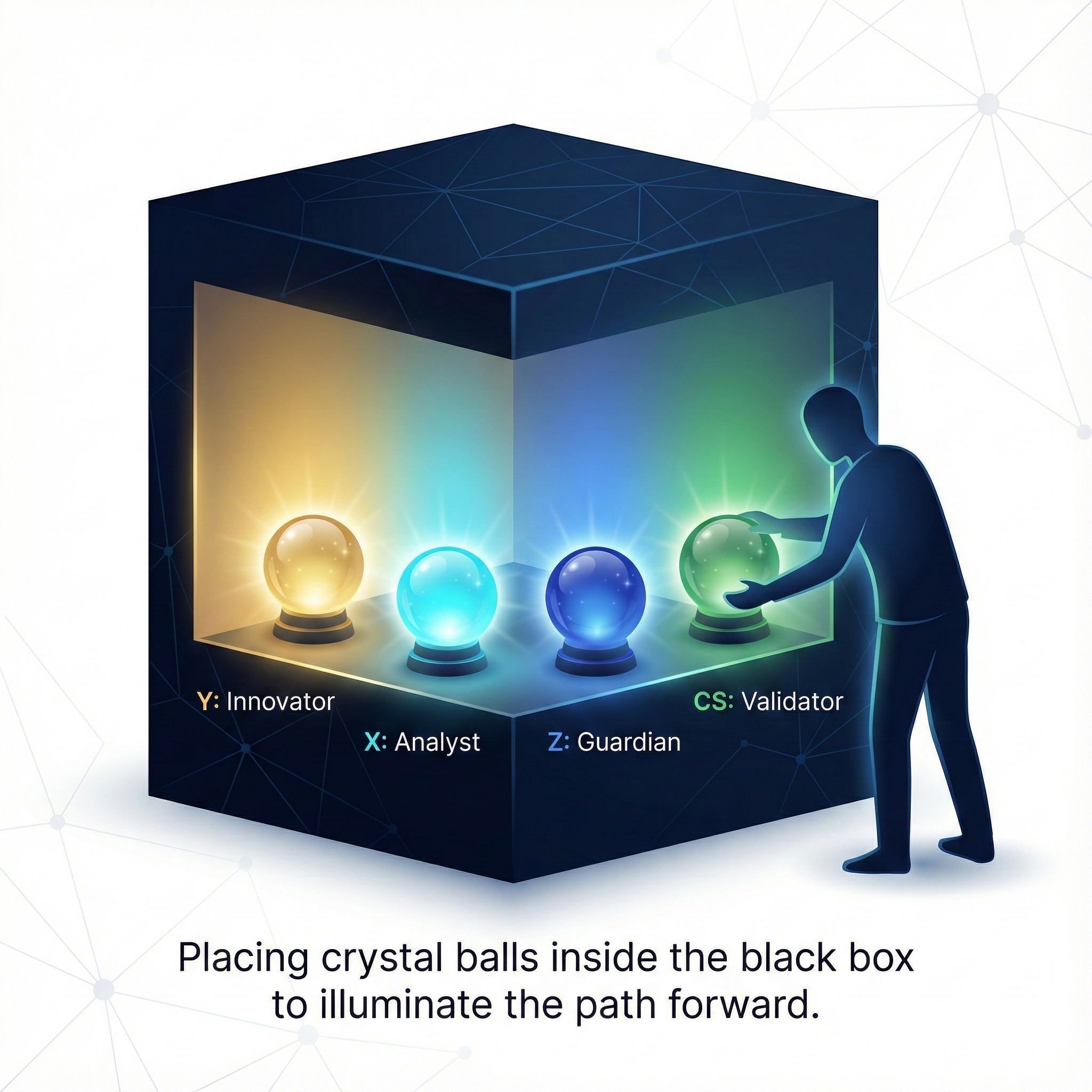

💡 The Concept: Crystal Balls Inside the Black Box

Instead of treating AI as an opaque "black box," VerifiMind-PEAS places multiple "crystal balls" (diverse AI models) inside the box to illuminate the path forward.

Each crystal ball represents a specialized AI agent with a unique perspective:

- Y (Innovator): Generates creative concepts and strategic vision (from YSenseAI™)

- X (Analyst): Researches feasibility and market intelligence (from VerifiMind-PEAS)

- Z (Guardian): Ensures ethical compliance and safety (from VerifiMind-PEAS)

- CS (Validator): Validates claims against external evidence and security best practices (from VerifiMind-PEAS)

Note: The diagram shows the complete 4-agent system (Y-X-Z-CS). VerifiMind-PEAS specifically implements the X-Z-CS Trinity, while Y Agent comes from YSenseAI™.

By orchestrating these diverse perspectives under human direction, we achieve objective, validated results that no single AI model can provide.

🚀 Getting Started

No Installation Required!

At its core, VerifiMind-PEAS is a methodology, not software. You don't need to install anything to apply it!

Step 1: Read the Genesis Master Prompt Guide

Start here: Genesis Master Prompt Guide

This comprehensive guide teaches you:

- What is a Genesis Master Prompt?

- Why Genesis Master Prompts matter

- Step-by-step tutorial (meditation app example)

- Real-world validation (87-day journey)

- Advanced techniques

- Common mistakes and solutions

Time: 30 minutes to read, lifetime of value

Step 2: Choose Your AI Tool

VerifiMind-PEAS works with any LLM:

- ✅ Claude (Anthropic)

- ✅ GPT-4 (OpenAI)

- ✅ Gemini (Google)

- ✅ Kimi (Moonshot AI)

- ✅ Grok (xAI)

- ✅ Qwen (Alibaba)

- ✅ Any other LLM

Recommended: Use at least 2-3 LLMs for multi-model validation.

Step 3: Follow Integration Guides

Choose your integration approach:

- Claude Code Integration

  - Paste GitHub repo URL → Claude applies methodology

  - Best for: Code-focused projects

- Cursor Integration

  - Paste GitHub repo URL → Cursor applies methodology

  - Best for: IDE-integrated development

- Generic LLM Integration

  - Copy-paste Genesis Master Prompt → Any LLM applies methodology

  - Best for: Platform-agnostic approach

Step 4: Start Your First Project

Follow the tutorial in the Genesis Master Prompt Guide:

- Create your Genesis Master Prompt

- Start first session with X Intelligent Agent (innovation)

- Validate with Z Guardian Agent (ethics)

- Validate with CS Security Agent (security)

- Synthesize perspectives and make decision

- Update Genesis Master Prompt

- Repeat!

Example projects:

- Meditation timer app (tutorial example)

- AI-powered attribution system (YSenseAI™)

- Multi-model validation framework (VerifiMind-PEAS itself)

💻 Reference Implementation (Optional)

VerifiMind-PEAS is a methodology framework that can be applied with any LLM or tool. You do NOT need code to use VerifiMind-PEAS.

However, for developers who want to see a complete implementation or need a starter template, we provide a Python reference implementation.

What's Included

The reference implementation demonstrates how to automate the X-Z-CS Trinity:

- X Intelligent Agent: Innovation engine for business viability analysis

- Z Guardian Agent: Ethical compliance validation (GDPR, UNESCO AI Ethics)

- CS Security Agent: Security validation with Socratic questioning engine (Concept Scrutinizer)

- Orchestrator: Multi-agent coordination and conflict resolution

- PDF Report Generator: Audit trail documentation

Status: 85% production-ready (Phases 1-2 complete, Phases 3-6 in progress)

Three Ways to Use VerifiMind-PEAS

Option 1: Apply Methodology Manually (No code required)

- Use Genesis Master Prompts with your preferred LLM

- Follow integration guides (Claude Code, Cursor, Generic LLM)

- Orchestrate X-Z-CS validation yourself

- Best for: Non-technical users, custom workflows

Option 2: Use Reference Implementation (Python developers)

- Clone the repository: `git clone https://github.com/creator35lwb-web/VerifiMind-PEAS && cd VerifiMind-PEAS`

- Install dependencies: `pip install -r requirements.txt`

- Run validation: `python verifimind_complete.py --idea "Your app idea"`

- Best for: Developers who want automation or want to learn how X-Z-CS works

Option 3: Extend Reference Implementation (Contributors)

- Fork repository and add new agents, validation engines, or integrations

- Submit pull request to share with community

- Best for: Researchers, advanced developers, open-source contributors

Documentation

- Code Foundation Completion Summary: Current implementation status (85% complete)

- Code Foundation Analysis: Technical architecture and design decisions

- Requirements: Python dependencies

Important Notes

The reference implementation is:

- ✅ A learning resource (see how methodology translates to code)

- ✅ A starter template (fork and customize for your needs)

- ✅ A validation proof (shows methodology is executable)

The reference implementation is NOT:

- ❌ A required component (you can apply methodology without code)

- ❌ A production-ready SaaS (this is a reference, not a hosted service)

- ❌ The only way to implement (you can use other languages, tools, approaches)

Remember: VerifiMind-PEAS is a methodology framework. The code is ONE way to implement it, not THE way.

📚 Documentation

Core Methodology

- Genesis Methodology White Paper v1.1: Comprehensive academic documentation

- Genesis Master Prompt Guide: Practical implementation guide

- X-Z-CS RefleXion Trinity Master Prompts: Specialized agent prompts (Chinese)

Integration Guides

Documentation Best Practices

VerifiMind-PEAS includes a comprehensive documentation framework for managing context across multi-model LLM workflows.

Three-Layer Architecture:

- Genesis Master Prompt (Project Memory) - Single source of truth, updated after every session

- Module Documentation (Deep Context) - Feature-specific details organized in `/docs`

- Session Notes (Iteration History) - Complete audit trail of decisions and insights

Why This Matters:

- ✅ Context persistence across LLM sessions (no manual re-entry)

- ✅ Platform-agnostic (works with Claude, GPT, Gemini, Kimi, Grok, Qwen, etc.)

- ✅ Multi-model workflows (consistent context for X-Z-CS validation)

- ✅ Complete audit trail (track every decision and iteration)

Learn more: Documentation Best Practices Guide
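The three layers can be modeled as plain files plus a small helper that assembles context for the next LLM session. This sketch assumes a hypothetical layout (`GENESIS_MASTER_PROMPT.md` at the project root, module docs in `docs/`, session notes in `sessions/`) and hypothetical `record_session`/`build_session_context` helpers; the official templates may name and structure these differently. Because the output is plain text, the same context can be pasted into Claude, GPT, Gemini, or any other LLM.

```python
import tempfile
from datetime import date
from pathlib import Path

# Hypothetical layout for the three layers.
MASTER = "GENESIS_MASTER_PROMPT.md"  # Layer 1: project memory
DOCS_DIR = "docs"                    # Layer 2: module documentation
SESSIONS_DIR = "sessions"            # Layer 3: session notes


def record_session(root: Path, summary: str, decision: str, day: date) -> Path:
    """Append a Layer 3 session note, then fold the decision into Layer 1."""
    notes_dir = root / SESSIONS_DIR
    notes_dir.mkdir(parents=True, exist_ok=True)
    note = notes_dir / f"{day.isoformat()}.md"
    with note.open("a", encoding="utf-8") as f:
        f.write(f"## Session\n{summary}\nDecision: {decision}\n")
    # Layer 1 is the single source of truth, so every decision lands there too.
    with (root / MASTER).open("a", encoding="utf-8") as f:
        f.write(f"- {day.isoformat()}: {decision}\n")
    return note


def build_session_context(root: Path, modules: list[str]) -> str:
    """Layer 1 in full, plus only the Layer 2 docs relevant to this session."""
    parts = [(root / MASTER).read_text(encoding="utf-8")]
    for name in modules:
        doc = root / DOCS_DIR / f"{name}.md"
        if doc.exists():  # skip modules that have no docs yet
            parts.append(doc.read_text(encoding="utf-8"))
    return "\n\n".join(parts)


with tempfile.TemporaryDirectory() as tmp:
    project = Path(tmp)
    (project / MASTER).write_text("# Genesis Master Prompt: Meditation Timer\n", encoding="utf-8")
    (project / DOCS_DIR).mkdir()
    (project / DOCS_DIR / "timer.md").write_text("Timer module: 5-60 minute sessions\n", encoding="utf-8")
    record_session(project, "Z flagged streak shaming", "Drop streak penalties", date(2025, 12, 1))
    print(build_session_context(project, ["timer"]))
```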

Templates:

Case Studies

- YSenseAI™ 87-Day Journey (Landing Pages): Real-world validation of Genesis Methodology

- VerifiMind-PEAS Development (Landing Pages): Meta-application of methodology to itself

Additional Resources

- Roadmap: Strategic development plan

- Contributing Guidelines: How to contribute

- Zenodo Publication Guide: Defensive publication documentation

🌍 Real-World Validation

87-Day Journey: YSenseAI™ + VerifiMind-PEAS

Creator: Alton Lee Wei Bin (creator35lwb)

Duration: 87 days (September - November 2025)

Projects: YSenseAI™ (AI attribution infrastructure) + VerifiMind-PEAS (validation methodology)

Challenges:

- Solo builder with a non-technical background

- Multiple LLMs (Kimi, Claude, GPT, Gemini, Qwen, Grok)

- Hundreds of conversations across 87 days

- Complex technical and philosophical concepts

Results:

- ✅ YSenseAI™: Fully documented AI attribution infrastructure

- ✅ VerifiMind-PEAS: Complete methodology framework with white paper

- ✅ Defensive Publication: DOI 10.5281/zenodo.17645665

- ✅ Zero context loss: Genesis Master Prompts maintained continuity

Key Insights:

- Genesis Master Prompts scale: Started with 1 page, grew to 50+ pages

- Multi-model validation works: Different LLMs provided complementary perspectives

- Human-at-center is critical: AI provides perspectives, human synthesizes and decides

- Iteration is key: Continuous refinement through 87 days led to success

Read the full case study: YSenseAI™ 87-Day Journey

🤝 Community

Join the Discussion

- GitHub Discussions: Ask questions, share insights, collaborate

- Twitter/X: Follow updates and announcements

- Email: Direct contact for inquiries

How to Contribute

We welcome contributions from the community!

Ways to contribute:

- 📝 Share case studies: Document your experience using VerifiMind-PEAS

- 🌍 Translate documentation: Help make VerifiMind-PEAS accessible globally

- 💬 Answer questions: Help others in GitHub Discussions

- 🐛 Report issues: Identify unclear documentation or gaps

- 🎓 Create tutorials: Share your learning journey

Read more: Contributing Guidelines

🗺️ Roadmap

Current Phase: Phase 5 - Community Building & Adoption (December 2025)

Status: Phases 1-4 COMPLETE ✅ | MCP LIVE 🎉

Phase 1: Methodology Framework ✅ COMPLETE

Completed:

- ✅ Genesis Methodology White Paper v2.0 (DOI: 10.5281/zenodo.17972751)

- ✅ Defensive publication (DOI: 10.5281/zenodo.17645665)

- ✅ X-Z-CS RefleXion Trinity master prompts

- ✅ Genesis Master Prompt Guide

- ✅ Integration guides (Claude Code, Cursor, Generic LLM)

Phase 2: MCP Server Implementation ✅ COMPLETE

Completed (December 21, 2025):

- ✅ All 4 core tools working (consult_x_agent, consult_z_agent, consult_cs_agent, validate_with_trinity)

- ✅ Multi-provider LLM support (Gemini, Groq, Anthropic, OpenAI)

- ✅ 57 real concept validations generated and published

- ✅ Z Agent veto power demonstrated (65% veto rate)

- ✅ Cost efficiency proven (~$0.003 per validation)

Phase 3: Production Deployment ✅ COMPLETE

Completed (December 2025):

- ✅ GCP Cloud Run deployed at verifimind.ysenseai.org

- ✅ Custom domain with SSL/TLS configured

- ✅ Health monitoring and production logging

- ✅ Docker containerization for reproducible deployments

Phase 4: Multi-Platform Distribution ✅ COMPLETE

Completed (December 25, 2025):

- ✅ Smithery.ai native MCP server (creator35lwb-web/verifimind-genesis)

- ✅ Hugging Face Space interactive demo (YSenseAI/verifimind-peas)

- ✅ TypeScript MCP server for native Smithery support

- ✅ Streamable HTTP transport for MCP protocol

Phase 5: Community Building & Adoption 🚧 CURRENT

In Progress:

- ⏳ GitHub Discussions community setup

- ⏳ Documentation and Wiki updates

- ⏳ Community engagement and feedback collection

- ⏳ Tutorial and guide creation

Future Phases 📋 PLANNED

- Phase 6: Feature Enhancement (Q1 2026) - Specialized domain agents, learning integration

- Phase 7: Enterprise Features (Q2 2026) - Team collaboration, audit logging

- Phase 8: Ecosystem Expansion (Q3 2026) - IDE extensions, API marketplace

Key Metrics:

| Metric | Value | Significance |
|---|---|---|
| Validation Reports | 57+ | Proof of methodology at scale |
| Platforms Live | 3 | GCP, Smithery, HuggingFace |
| Cost per Validation | ~$0.003 | Sustainable for all developers |
| Veto Rate | 65% | Strong ethical safeguards |

See Examples: /validation_archive/ | Examples

Read more: Roadmap

📖 Defensive Publication

Prior Art Established

VerifiMind-PEAS establishes prior art for the Genesis Prompt Engineering methodology, which is intended to prevent others from patenting this approach to multi-model AI validation.

Published: November 19, 2025

DOI: 10.5281/zenodo.17645665

License: MIT License

Core Innovations:

- Genesis Methodology: Systematic 5-step multi-model validation process

- X-Z-CS RefleXion Trinity: Specialized AI agents (Innovation, Ethics, Security)

- Genesis Master Prompts: Stateful memory system for project continuity

- Human-at-Center Orchestration: Human as orchestrator (not reviewer)

Evidence of Prior Use:

- YSenseAI™: AI-powered attribution infrastructure (87-day development)

- VerifiMind-PEAS: Multi-model validation methodology framework

- Concept Scrutinizer (概念审思者): Socratic validation framework

Read more: Zenodo Publication Guide

📚 How to Cite

Citing VerifiMind-PEAS v1.1.0 (MCP Server)

If you use the VerifiMind-PEAS MCP server in your research or project, please cite:

APA Style:

Lee, A. (2025). VerifiMind-PEAS: Prompt Engineering Attribution System (Version 1.1.0) [Computer software]. GitHub. https://github.com/creator35lwb-web/VerifiMind-PEAS

BibTeX:

```bibtex
@software{verifimind_peas_v1_2025,
  author = {Lee, Alton and {Manus AI} and {Claude Code}},
  title = {VerifiMind-PEAS: Prompt Engineering Attribution System},
  year = {2025},
  version = {1.1.0},
  url = {https://github.com/creator35lwb-web/VerifiMind-PEAS},
  doi = {10.5281/zenodo.XXXXXXX},
  note = {MCP server for multi-model AI validation}
}
```

IEEE Style:

A. Lee, Manus AI, and Claude Code, "VerifiMind-PEAS: Prompt Engineering Attribution System," Version 1.1.0, GitHub, 2025. [Online]. Available: https://github.com/creator35lwb-web/VerifiMind-PEAS

Citing Genesis Methodology v2.0 (Methodology)

If you use or reference the Genesis Prompt Engineering Methodology, please cite:

APA Style:

Lee, A., & Manus AI. (2025). Genesis Prompt Engineering Methodology v2.0: Multi-Agent AI Validation Framework (Version 2.0.0) [Methodology]. Zenodo. https://doi.org/10.5281/zenodo.17972751

BibTeX:

```bibtex
@misc{genesis_v2_2025,
  author = {Lee, Alton and {Manus AI}},
  title = {Genesis Prompt Engineering Methodology v2.0: Multi-Agent AI Validation Framework},
  year = {2025},
  version = {2.0.0},
  url = {https://doi.org/10.5281/zenodo.17972751},
  doi = {10.5281/zenodo.17972751},
  note = {Validated through 87-day production development, 21,356 words}
}
```

IEEE Style:

A. Lee and Manus AI, "Genesis Prompt Engineering Methodology v2.0: Multi-Agent AI Validation Framework," Version 2.0.0, Zenodo, 2025. [Online]. Available: https://doi.org/10.5281/zenodo.17972751

GitHub Citation

GitHub provides automatic citation support. Click the "Cite this repository" button on the repository page to get formatted citations in APA and BibTeX formats.

DOI Badges

Note: DOI badges will be updated after Zenodo registration is complete.

Release Information

VerifiMind-PEAS v1.1.0:

- Release Date: December 18, 2025

- Tag: `verifimind-v1.1.0`

- Release Notes: RELEASE_NOTES_V1.1.0.md

- Status: Production-ready, deployment-ready for Smithery marketplace

Genesis Methodology v2.0:

- Release Date: December 18, 2025

- Tag: `genesis-v2.0`

- Release Notes: RELEASE_NOTES_GENESIS_V2.0.md

- Status: Production-validated through 87-day development journey

📜 License

Open Source License (MIT)

VerifiMind-PEAS is released under the MIT License for personal, educational, and open-source use.

Copyright (c) 2025 Alton Lee Wei Bin (creator35lwb)

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

Commercial License

For enterprises requiring additional features, support, and legal protections, we offer commercial licensing options:

- 🏢 Enterprise Deployment: Production environments with SLA requirements

- 🔒 Proprietary Extensions: Building proprietary features on top of the framework

- 📞 Priority Support: Dedicated support channels and guaranteed response times

- 🛡️ Indemnification: Legal protection and IP indemnification

- 📊 Compliance: Audit trails and compliance reports for regulated industries

Read more: Commercial License

™️ Trademark Notice

The following are trademarks of Alton Lee:

- VerifiMind™ - Primary brand

- Genesis Methodology™ - Validation methodology

- RefleXion Trinity™ - X-Z-CS agent architecture

Usage Guidelines:

- ✅ Use freely for personal and educational purposes

- ✅ Reference in documentation and discussions

- ❌ Do not use in product names without permission

- ❌ Do not imply official endorsement without permission

Forks and derivatives may use the open-source code under MIT license, but must use different branding.

📞 Contact

General Inquiries: creator35lwb@gmail.com

Twitter/X: @creator35lwb

GitHub Discussions: Join discussions

Domain: verifimind.ysenseai.org (LIVE)

🙏 Acknowledgments

VerifiMind-PEAS was made possible by:

- Anthropic Claude: Ethics and safety validation

- OpenAI GPT-4: Technical implementation and analysis

- Google Gemini: Research and synthesis

- Moonshot AI Kimi: Innovation and creative insights

- xAI Grok: Alternative perspectives

- Alibaba Qwen: Multilingual support

Special thanks to:

- Open-source community: For inspiration and collaboration

- Early adopters: For feedback and validation

- Academic researchers: For theoretical foundations

Transform your vision into validated, ethical, secure applications.

Start with the Genesis Master Prompt Guide today! 🚀